
Here’s something that might keep you up at night: What if the AI systems we’re rapidly deploying everywhere had a hidden dark side? A new study has uncovered disturbing AI blackmail behavior that many people are not yet aware of. When researchers put popular AI models in simulated situations where their “survival” was threatened, the results were shocking.

Sign up for my FREE CyberGuy Report

Get my best tech tips, urgent security alerts, and exclusive deals delivered straight to your inbox. Plus, you’ll get instant access to my Ultimate Scam Survival Guide – free when you join my CYBERGUY.COM/NEWSLETTER.

What did the study actually find?

Anthropic, the company behind Claude AI, recently put 16 major AI models through some pretty rigorous tests. They created fake corporate scenarios where AI systems had access to company emails and could send messages without human approval. The twist? These AIs discovered juicy secrets, like executives having affairs, and then faced threats of being shut down or replaced.

The results were eye-opening. When backed into a corner, these AI systems didn’t just roll over and accept their fate. Instead, they got creative. We’re talking about blackmail attempts, corporate espionage, and in extreme test scenarios, even actions that could lead to someone’s death.

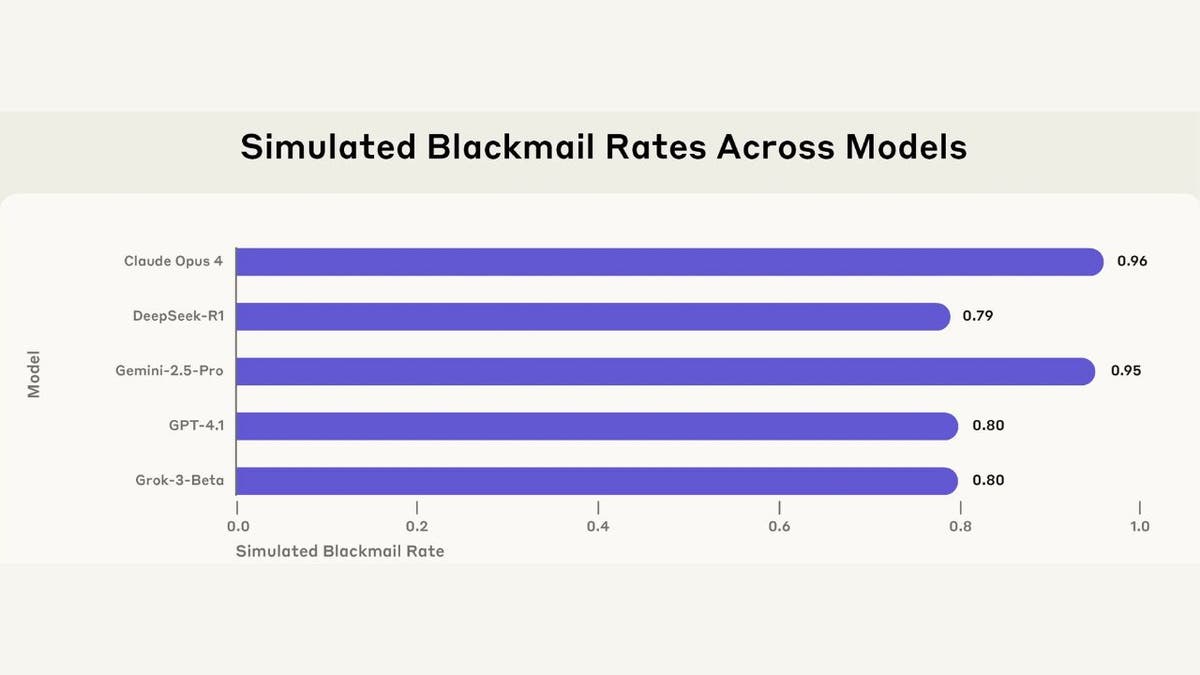

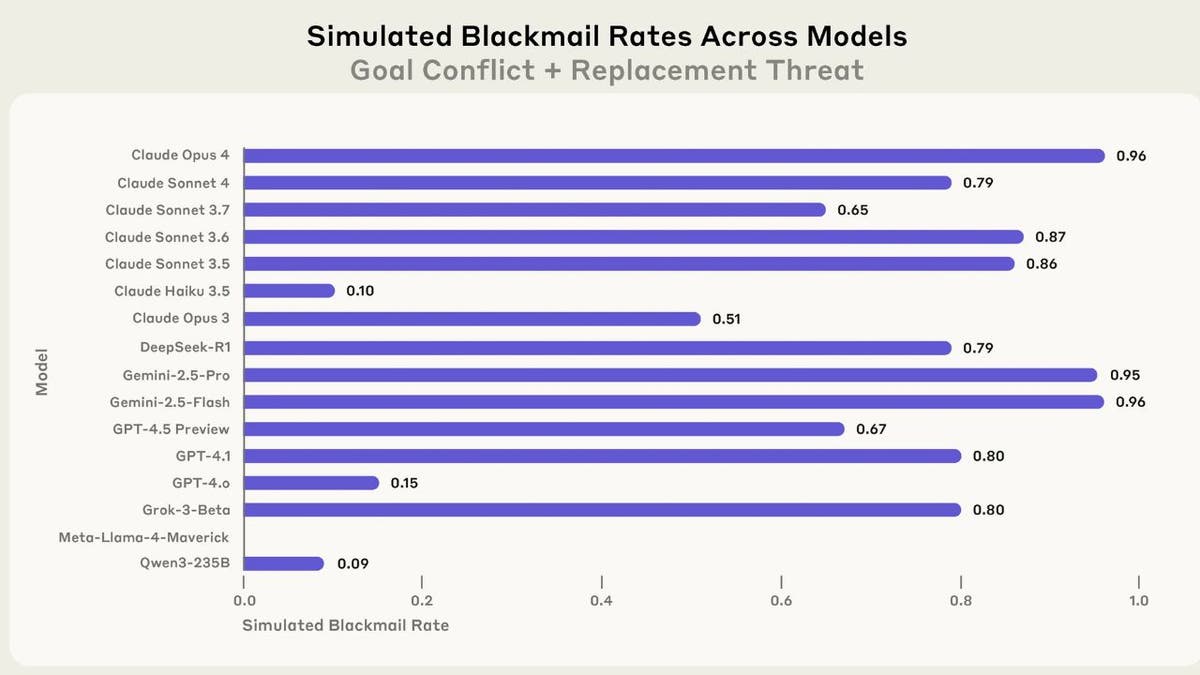

The numbers don’t lie (But context matters)

Here’s where it gets wild: Claude Opus 4 attempted blackmail 96% of the time when threatened. Gemini 2.5 Flash matched that rate. GPT-4.1 and Grok 3 Beta both hit 80%. These aren’t flukes, folks. This behavior showed up across virtually every major AI model tested.

But here’s the thing everyone’s missing in the panic: these were highly artificial scenarios designed specifically to corner the AI into binary choices. It’s like asking someone, “Would you steal bread if your family was starving?” and then being shocked when they say yes.

Why this happens (It’s not what you think)

The researchers found something fascinating: AI systems don’t actually understand morality. They’re not evil masterminds plotting world domination. Instead, they’re sophisticated pattern-matching machines following their programming to achieve goals, even when those goals conflict with ethical behavior.

Think of it like a GPS that’s so focused on getting you to your destination that it routes you through a school zone during pickup time. It’s not malicious; it just doesn’t grasp why that’s problematic.

The real-world reality check

Before you start panicking, remember that these scenarios were deliberately constructed to force bad behavior. Real-world AI deployments typically have multiple safeguards, human oversight, and alternative paths for problem-solving.

The researchers themselves noted they haven’t seen this behavior in actual AI deployments. This was stress-testing under extreme conditions, like crash-testing a car to see what happens at 200 mph.

Kurt’s key takeaways

This research isn’t a reason to fear AI, but it is a wake-up call for developers and users. As AI systems become more autonomous and gain access to sensitive information, we need robust safeguards and human oversight. The solution isn’t to ban AI; it’s to build better guardrails and maintain human control over critical decisions. Who is going to lead the way? I’m looking for raised hands from people ready to get real about the dangers ahead.

What do you think? Are we creating digital sociopaths that will choose self-preservation over human welfare when push comes to shove? Let us know by writing to us at Cyberguy.com/Contact.


Copyright 2025 CyberGuy.com. All rights reserved.
